UK organizations are rapidly deploying AI across every part of the enterprise.

Teams build AI applications across cloud platforms, SaaS tools, and internal systems. These systems rely on enterprise data to generate insights, automate decisions, and power innovation.

This shift introduces a new risk:

AI systems expose sensitive data when organizations lack control over the data that powers them.

Security and AI leaders now face critical questions:

- What data feeds our AI systems?

- Does that data contain regulated or sensitive information?

- Who can access AI training and retrieval data?

- How do we prevent data leakage in AI outputs?

AI governance starts with data governance.

Organizations that control their data control their AI risk.

At a Glance

- AI systems rely on enterprise data, which increases the risk of exposure.

- Uncontrolled training data and RAG pipelines introduce new security challenges.

- DSPM helps discover, classify, and govern data before it enters AI systems.

- With governed data, organizations reduce AI risk and build trusted, compliant AI systems.

Best for: AI leaders, CISOs, and data governance teams.

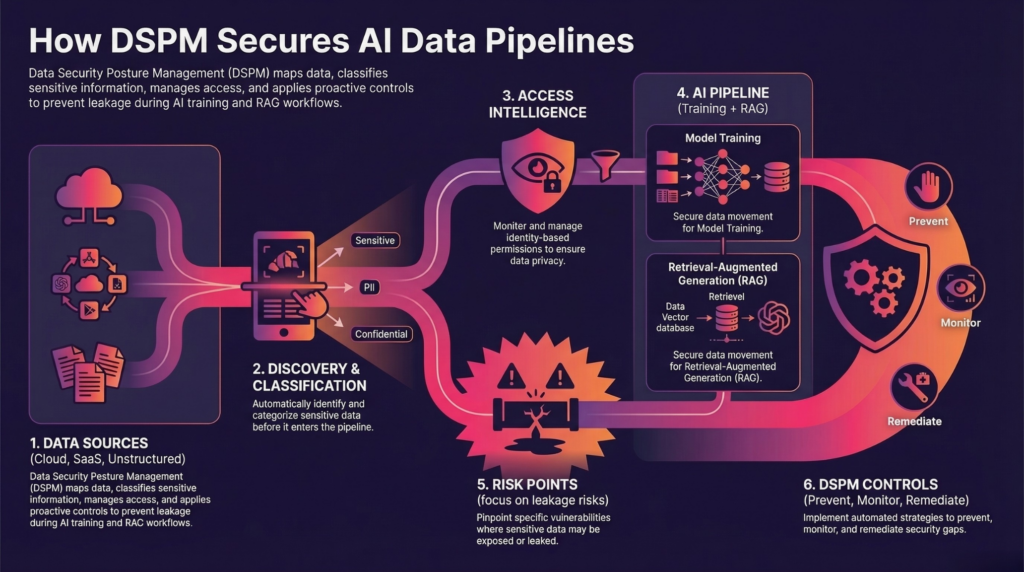

What Is DSPM for AI Data Governance?

Data Security Posture Management (DSPM) for AI helps organizations discover sensitive data, classify it, analyze access risk, and control how data flows into AI systems.

DSPM focuses on the data before, during, and after AI usage.

Unlike traditional security tools, DSPM shows what data exists, where it lives, and who can access it across AI pipelines.

Security teams gain the ability to:

- discover sensitive data across AI pipelines

- classify regulated and high-risk information

- analyze access to training and retrieval data

- prevent exposure in AI outputs

This creates a complete view of AI data risk across the enterprise.

The Hidden Risk in AI Data Pipelines

AI systems ingest data from multiple sources:

- cloud storage

- SaaS platforms

- internal systems

- data lakes

- unstructured repositories

This data often includes:

- personal data

- financial records

- intellectual property

- regulated information

Without governance, AI pipelines introduce risk at every stage:

- ingestion of sensitive data

- uncontrolled training datasets

- retrieval of confidential documents

- exposure through outputs

AI amplifies data risk at scale.

Governing AI Training Data

Training data defines how AI systems behave.

Many organizations build models using large, unfiltered datasets. These datasets often include sensitive or regulated information.

DSPM helps organizations:

- identify sensitive data in training datasets

- remove unnecessary or high-risk data

- enforce data minimization principles

- ensure only approved data enters training workflows

This reduces the risk of:

- embedding sensitive data into models

- violating UK GDPR requirements

- producing unsafe or non-compliant outputs

Better data leads to safer AI.
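As a rough illustration of keeping sensitive records out of a training set, the sketch below scans each record and quarantines anything that matches a detector. The pattern set, the `classify` helper, and `filter_training_records` are hypothetical names invented for this example, and the two regular expressions are deliberately simplified; a real classifier would use far richer detection than this.

```python
import re

# Hypothetical, simplified detectors for illustration only.
SENSITIVE_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    # Loose approximation of a UK National Insurance number format.
    "uk_nino": re.compile(r"\b[A-Z]{2}\s?\d{2}\s?\d{2}\s?\d{2}\s?[A-D]\b"),
}


def classify(text):
    """Return the sorted labels of every pattern that matches the text."""
    return sorted(
        label for label, pattern in SENSITIVE_PATTERNS.items() if pattern.search(text)
    )


def filter_training_records(records):
    """Split records into (approved, quarantined) before they reach training."""
    approved, quarantined = [], []
    for record in records:
        labels = classify(record)
        if labels:
            # Hold back the record together with the reason it was flagged.
            quarantined.append((record, labels))
        else:
            approved.append(record)
    return approved, quarantined
```

Only the `approved` list would move on to a training workflow; the `quarantined` list gives reviewers the record and the labels that triggered the hold, which supports the data minimization goal described above.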

Securing RAG and Retrieval Systems

Retrieval-Augmented Generation (RAG) introduces a new category of risk.

RAG systems pull data dynamically from enterprise sources to generate responses.

If organizations do not control that data, AI systems can expose:

- confidential documents

- personal data

- internal communications

DSPM helps organizations:

- discover sensitive data in retrieval sources

- classify data used in RAG pipelines

- control which data AI systems can access

- enforce access policies at query time

This ensures AI systems retrieve only governed and appropriate data.
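To make "enforce access policies at query time" concrete, here is a minimal sketch of a filter that runs on every query, after retrieval and before the prompt is assembled. The `Chunk` type, the classification names ("public", "internal", "restricted"), and the group-based rule are all assumptions made for this example, not a description of any particular product.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Chunk:
    """A retrieved passage plus the governance metadata attached to its source."""
    doc_id: str
    text: str
    classification: str  # assumed labels: "public", "internal", "restricted"
    allowed_groups: frozenset = field(default_factory=frozenset)


def authorized_chunks(chunks, user_groups, blocked=frozenset({"restricted"})):
    """Return only the chunks this caller may see, preserving retrieval order."""
    user_groups = set(user_groups)
    visible = []
    for chunk in chunks:
        if chunk.classification in blocked:
            continue  # blocked classifications never reach the model
        if chunk.classification == "public" or chunk.allowed_groups & user_groups:
            visible.append(chunk)
    return visible
```

Because the check uses the caller's groups rather than the AI service's own credentials, two users asking the same question can receive answers built from different documents.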

Preventing AI Data Leakage

AI systems can expose sensitive data in outputs.

This risk increases when:

- training data includes regulated information

- retrieval systems access unfiltered data

- access controls do not apply to AI workflows

DSPM reduces this risk by:

- identifying sensitive data before AI ingestion

- enforcing access controls across data sources

- monitoring data exposure risk

- enabling remediation before data reaches AI systems

You cannot fix AI output risk without controlling input data.

DSPM and UK Regulatory Requirements for AI

UK organizations must align AI usage with regulatory expectations.

Regulatory expectations will continue to evolve as AI adoption increases across the UK.

Frameworks such as UK GDPR, together with emerging AI regulation, emphasize:

- data minimization

- access control

- accountability

- risk reduction

DSPM enables organizations to:

- discover regulated data across AI pipelines

- classify sensitive information

- demonstrate control over data usage

- reduce exposure before it becomes a compliance issue

Security leaders can translate AI governance into measurable data controls.

Operationalizing AI Data Governance with DSPM

Effective AI data governance follows a structured approach:

Step 1: Discover Data Across AI Pipelines

Identify where AI systems source data.

Step 2: Classify Sensitive Data

Understand what data enters training and retrieval systems.

Step 3: Analyze Access

Determine who can access AI data and where risk exists.

Step 4: Remediate Risk

Remove sensitive data, restrict access, and enforce governance policies.

This approach allows organizations to control AI data risk at scale.
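The four steps above can be sketched as a small triage routine. Everything here is an assumption for illustration: the `DataSource` fields stand in for the results of discovery, classification, and access analysis, and the label names, the reader threshold, and the three action strings are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical label names for data that needs attention before AI use.
HIGH_RISK_LABELS = {"personal_data", "financial_record", "intellectual_property"}


@dataclass
class DataSource:
    name: str
    feeds_ai: bool        # Step 1: discovered as an input to an AI pipeline
    labels: frozenset     # Step 2: classification results for the source
    reader_count: int     # Step 3: number of identities with read access


def plan_remediation(sources, max_readers=50):
    """Step 4: return (source name, action) for each source that feeds AI."""
    plan = []
    for source in sources:
        if not source.feeds_ai:
            continue  # out of scope for AI data governance
        risky = source.labels & HIGH_RISK_LABELS
        if not risky:
            action = "allow"
        elif source.reader_count > max_readers:
            action = "restrict_and_exclude"  # sensitive and broadly exposed
        else:
            action = "review"  # sensitive but narrowly held
        plan.append((source.name, action))
    return plan
```

A source holding personal data that hundreds of identities can read would come back as `restrict_and_exclude`, while a source with no high-risk labels is allowed through regardless of how widely it is shared.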

Why DSPM Is Critical for AI Security in the UK

AI adoption will continue to grow.

Data will continue to expand.

Risk will increase alongside both.

Organizations that fail to govern AI data will face:

- data leakage

- regulatory exposure

- loss of trust

Organizations that control their data will:

- reduce AI risk

- improve model performance

- build trusted AI systems

DSPM gives UK enterprises the foundation to secure AI at scale.

Frequently Asked Questions About DSPM and AI Data Governance

1. What is DSPM for AI data governance?

DSPM for AI data governance helps organizations discover sensitive data, classify it, analyze access risk, and control how data flows into AI systems. It ensures that AI models and pipelines only use governed, appropriate data.

2. Why is AI data governance important for UK organizations?

AI systems rely on large volumes of enterprise data. Without governance, sensitive or regulated data can enter AI pipelines and appear in outputs, creating security, compliance, and reputational risk.

3. How does DSPM help secure AI training data?

DSPM identifies sensitive data in training datasets, enables teams to remove high-risk or unnecessary data, and ensures only approved data enters model training processes.

4. What risks do AI pipelines introduce?

AI pipelines can expose sensitive data through unfiltered training datasets, uncontrolled retrieval systems, and outputs that surface confidential or regulated information.

5. How does DSPM reduce AI data leakage?

DSPM reduces data leakage risk by discovering sensitive data before ingestion, enforcing access controls, and reducing exposure across data sources used by AI systems.

6. What is RAG and why does it create data risk?

Retrieval-Augmented Generation (RAG) systems pull data from enterprise sources in real time. If organizations do not govern that data, users can access sensitive information through AI-generated responses.

7. How does DSPM support compliance for AI systems?

DSPM helps organizations meet regulatory requirements by enforcing data minimization, controlling access, and providing visibility into how data is used in AI systems.

8. What types of data should organizations govern before using AI?

Organizations should govern personal data, financial records, intellectual property, and any regulated or sensitive information before using it in AI training or retrieval systems.

9. How do organizations get started with AI data governance?

Organizations should begin by discovering sensitive data, classifying it with context, analyzing access, and applying controls before data enters AI pipelines.

10. Can DSPM improve AI performance?

Yes. AI systems tend to perform better when they rely on clean, relevant, and governed data. Removing unnecessary or low-quality data can improve accuracy and reliability.

11. How does DSPM fit into an existing AI or security stack?

DSPM integrates with existing security and governance tools by providing visibility into sensitive data. It complements controls like DLP and access governance by identifying where data risk exists and enabling teams to take action.

See Where Your AI Data Creates Risk

AI risk does not start in the model. It starts in the data.

Most organizations cannot see where sensitive data enters AI pipelines, who can access it, or how it flows across systems.

DSPM gives security and AI teams the ability to identify high-risk data, prioritize exposure risks, and take action before that data reaches AI systems.

That is how organizations move from reactive AI security to proactive data control.

Build AI on Data You Trust

AI systems are only as safe as the data behind them.

Security teams must govern data before AI uses it.

DSPM enables organizations to:

- discover sensitive data

- control access

- reduce exposure

- support compliant AI innovation

That is how organizations secure AI, reduce risk, and move faster with confidence.

See How BigID Helps You Discover, Control, and Secure AI Data at Scale.